NOTES BY ANDREW LILES

The machine this ‘music’ is made on is the Open NSynth Super. I call mine the A.U.M.M – the Artificially Unintelligent Monster Maschine. Basically, you can add one or more instruments to each corner of the machine, and where you place your finger on the X-Y pad in the centre determines the amount of blending between the instruments.

For example, you could assign a piano sound to the left corner and a guitar to the right corner. If you placed your finger on the left corner you would hear only piano. If you placed your finger on the right corner you would hear only guitar. If you placed your finger midway between the left and right you would hear half guitar and half piano, thus creating a hybrid instrument – the Piatar, if you will. Artificial Intelligence (AI), through very intense and data-heavy calculations, determines what it ‘thinks’ the Piatar should sound like.
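The way a finger position could translate into a blend can be sketched in a few lines of Python. This is only an illustration of the idea, assuming a square pad with coordinates from 0 to 1 and simple bilinear weighting; the function name `blend_weights` and the corner labels are hypothetical and are not taken from the Open NSynth Super itself. The weights describe how much of each corner is in the mix; they do not produce the hybrid sound, which is generated by the NSynth algorithm.

```python
def blend_weights(x: float, y: float) -> dict[str, float]:
    """Return the share of each corner for a touch at (x, y).

    Assumption for illustration: x runs 0 (left) to 1 (right),
    y runs 0 (bottom) to 1 (top), and the blend is bilinear.
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError("x and y must both lie between 0 and 1")
    return {
        "top_left": (1 - x) * y,
        "top_right": x * y,
        "bottom_left": (1 - x) * (1 - y),
        "bottom_right": x * (1 - y),
    }


# Piano in the bottom-left corner, guitar in the bottom-right corner:
# a finger halfway along the bottom edge gives an equal share of each.
piatar = blend_weights(0.5, 0.0)
print(piatar["bottom_left"], piatar["bottom_right"])  # 0.5 0.5
```

Whatever the finger position, the four shares always add up to one, so moving across the pad shifts the balance between corners without changing the overall amount of sound.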

A traditional composer would, I presume, have instruments tuned to be in harmony with one another; synced, tuned, clean and complementary sounds.

I’m not a traditional composer, arguably not a composer at all, so I decided that each note would be a different sound. I chose 128/256 random and not-so-random sounds – all kinds of tuned sounds and sound effects. The AI would in turn process these sounds in such a way that I could theoretically create an ‘illogical’ instrument with no musical scale or distinct hybrid ‘instrument’ but many different permutations, each note played on the keyboard being its own instrument.

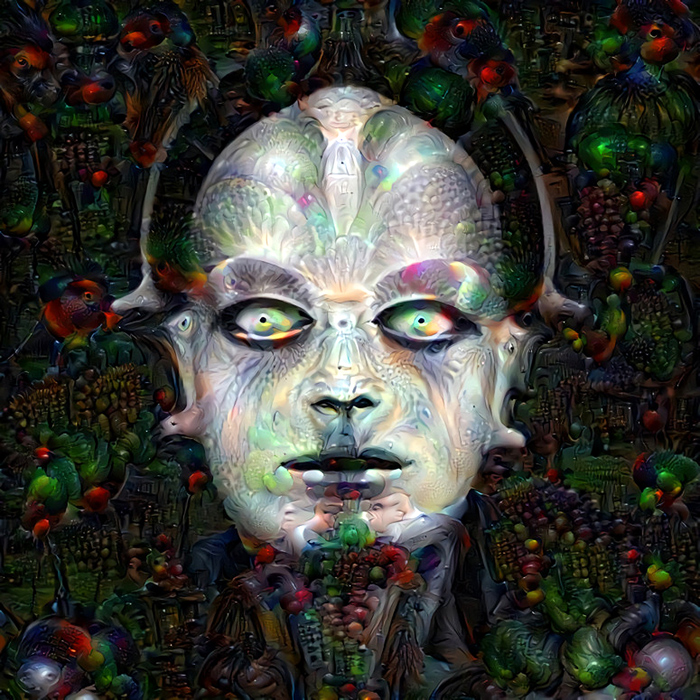

I wanted to design a machine that had no logic and that would not create a formal musical structure. I wanted the AI to invent tones that had never been heard before. A universe of sound reinvented by the computer’s dreams and imagination.

The sounds you hear on this recording are made entirely by the NSynth machine learning algorithm. I have used a sparing amount of EQ, stereo effects, reverb and echo to smooth out the sounds. The music is played by me, and the raw sounds fed into NSynth are chosen by me, but other than that, all the sounds you are hearing come from the imagination of a computer.

AUDIO QUALITY

Due to the limitations and format of the files that are used for the NSynth, this recording may, in part, lack some fidelity.

TECHNICAL NOTES BY ANDREW BACK

To quote its creators, ‘NSynth uses deep neural networks to generate sounds at the level of individual samples. Learning directly from data, NSynth provides artists with intuitive control over timbre and dynamics, and the ability to explore new sounds that would be difficult or impossible to produce with a hand-tuned synthesizer.’

The NSynth algorithm is far too computationally intensive to be run in real time, or indeed on anything but a reasonably powerful computer. In this case a workstation equipped with 2x Nvidia GTX 1080 Ti GPUs, together capable of over 22 trillion calculations per second, was used.

With four instruments per corner and 16 notes (pitches) each, the algorithm took just short of 3 days to run, generating over 10,000 audio files in total, covering the many different combinations of input sounds, the interpolation points between them, and pitch.
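To give a feel for why the number of files grows so quickly, here is a rough back-of-envelope sketch in Python. It assumes four corners, that every choice of one instrument per corner is rendered at every pitch, and that each such choice is rendered at a number of interpolation points, `grid_points`. That last parameter is hypothetical: these notes do not state how many interpolation points were used, and the function `naive_file_count` is illustrative rather than part of the NSynth tooling, so the result is not a reconstruction of the actual total.

```python
def naive_file_count(instruments_per_corner: int,
                     corners: int,
                     pitches: int,
                     grid_points: int) -> int:
    """Naive count: one file per (instrument choice, grid point, pitch).

    grid_points is an assumed, hypothetical parameter; the real
    pipeline may generate fewer files than this simple product.
    """
    # One instrument is picked for each corner independently.
    instrument_choices = instruments_per_corner ** corners
    return instrument_choices * grid_points * pitches


# Four instruments on each of four corners gives 4**4 = 256 choices.
# At 16 pitches, that is already 4096 files per interpolation point.
print(naive_file_count(4, 4, 16, grid_points=1))  # 4096
```

Because the count multiplies rather than adds, each extra instrument, pitch or interpolation point increases the rendering time considerably.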

Once the artificially generated sounds had been created they were loaded onto the Open NSynth Super, which provides a physical interface for navigating the sounds that exist in between the sounds input to NSynth.

WITH THANKS

This project would not have been possible without the talents of Andrew Back, who introduced me to, and built me, an NSynth Super. I am eternally grateful for his technical skills and the time he has dedicated to this experiment.

Andrew Back has been making and breaking electronics for as long as he can remember. He runs an engineering consultancy and is an occasional artist – andrewback.net